Stockman Pod Extreme on Cruisemaster XT

Revising Cruisemaster XT Freestyle spring rates to suit the Stockman Pod Extreme.

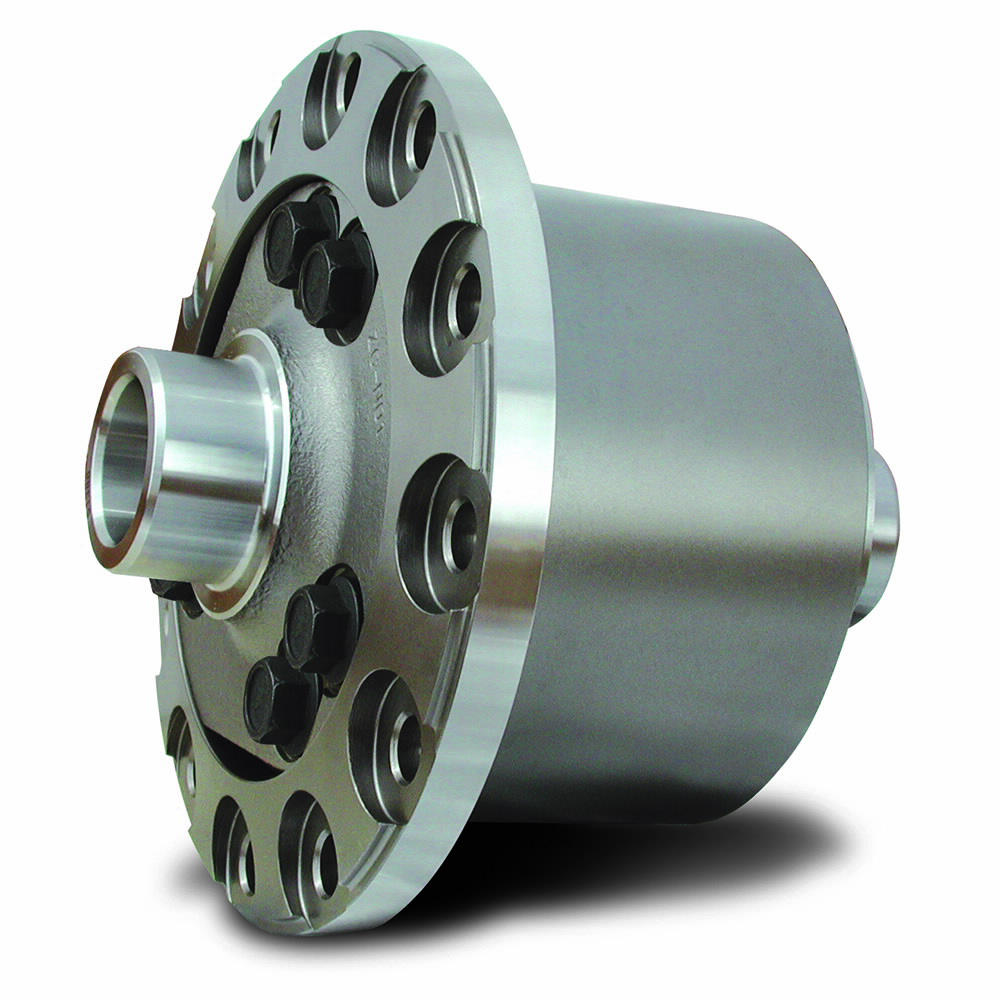

Cross-axle differential options for Jeep Wrangler JL

On selecting and installing an Eaton Truetrac Torsen-style differential in the rear axle of a Jeep Wrangler JL Rubicon.

Equipment Rack for Wrangler JL

A removable equipment rack carrying electrical, water, refrigeration, food, and food preparation gear in a Jeep Wrangler JL.

MS BASIC Machine Monitor for RC2014

On writing a machine code monitor for the 8085 and Z80 using Microsoft BASIC.
